DLSS 5: The Shape of Things to Come

Just because we can, doesn't mean we should.

Nvidia recently let us have an early look at DLSS 5. The bad publicity made me think about what the future of video games might have in store for us. It made me uneasy, so I want to share my view on it, plus a retrospective on upscaling tech and DLSS.

How the Fake Frames Came to Be

I think everyone who grew up with games before the 2010s had at least one misguided “oh my god this looks like real life, nothing can ever beat this”-moment. My first came rather late, but it informed my uninformedness about the lengths we’d go in pursuit of making games prettier.

Virtual Hydlide (1995), one of the earliest examples of a fully digitized photo-based main character. It’s literally real! (Source)

We’ve come a long way from nearest neighbor scaling. Despite their shortcomings, mostly a result of analog image transmission protocols, CRT displays allowed for creative tricks to improve perceived image quality.

One famous example is from Castlevania: Symphony of the Night (1997) for the PlayStation 1. Notice how the smear on Dracula’s pupils expands the red dot to fill his eyes. (Source)

There are also blended dithering transparency effects documented, like in Sonic the Hedgehog (1991). We see the inherent blending properties help with reducing aliasing, smoothing limited color palette content, and making it appear as though there is more detail than there really is.

The Early Years

Since the inception of computer graphics, we’ve been trying to make them prettier and run faster. In offline rendering, early work on RenderMan in the 1980s introduced the concepts of anti-aliasing and supersampling, among many other advancements. The stochastic sampling technique developed for the REYES rendering algorithm (which RenderMan is an implementation of) is the ancestor of what we know as MSAA these days, sampling polygons at subpixel precision.

Since then, rasterized rendering complexity has been a function of the number of triangles, shader complexity and sampling resolution, and we’ve been finding different ways to work around limitations in each category. We decrease the number of triangles through culling, LOD systems and impostors, or virtualized geometry systems like Nanite. We decrease shader complexity through shader variants and LOD systems, as well as through many approximations compared to shaders meant for offline rendering. Traditionally, there have been few substitutes for resolution, with most techniques sitting somewhere on a spectrum of brute force.

The same desire to make offline 3D renders prettier and run faster also applies to 3D games of course. It’s pretty much the same thing, only in real-time.

The first instance of something akin to upscaling that I found is in DOOM (1993), which offers a low detail mode that naively doubles every rendered column of pixels, effectively rendering at only half the horizontal resolution.

In his awesome Game Engine Black Book: DOOM, Fabien Sanglard demonstrates the low detail mode. (Source)
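To make the column doubling concrete, here is a minimal Python sketch. It is not DOOM's actual code; `render_column` and `demo_column` are hypothetical stand-ins for a renderer that produces one vertical strip of pixels at a time.

```python
def render_low_detail(render_column, width, height):
    """Render only every second column and copy it into its right-hand neighbour."""
    frame = [[0] * width for _ in range(height)]
    for x in range(0, width, 2):
        column = render_column(x)  # list of `height` pixel values
        for y in range(height):
            frame[y][x] = column[y]
            if x + 1 < width:
                frame[y][x + 1] = column[y]  # naive doubling, no filtering
    return frame


def demo_column(x, height=2):
    # Hypothetical renderer: the pixel value simply encodes the column index.
    return [x] * height


frame = render_low_detail(demo_column, width=6, height=2)
```

Only half of the columns are ever rendered, which is where the performance gain comes from; the cost is halved horizontal detail.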

Ever since then, gamers have been adjusting the output resolution to gain performance. CRT monitors being able to accept almost any resolution natively also made changing resolution easy and a bit less noticeable. Over the years we’ve also been naively supersampling games by forcing them to render at a higher resolution than our output resolution, commonly referred to as SSAA.
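The naive supersampling idea boils down to rendering more pixels than you display and averaging them. A minimal sketch, assuming a grayscale frame rendered at exactly twice the output width and height and a plain box filter (real drivers and games may use other factors and filters):

```python
def downsample_2x(hi_res):
    """Average each 2x2 block of a frame rendered at twice the output size."""
    height = len(hi_res) // 2
    width = len(hi_res[0]) // 2
    return [
        [
            (
                hi_res[2 * y][2 * x]
                + hi_res[2 * y][2 * x + 1]
                + hi_res[2 * y + 1][2 * x]
                + hi_res[2 * y + 1][2 * x + 1]
            ) / 4
            for x in range(width)
        ]
        for y in range(height)
    ]


# A hard vertical edge that falls inside an output pixel becomes a blend.
edge = [[0, 1],
        [0, 1]]
smoothed = downsample_2x(edge)
```

Every output pixel costs four rendered pixels here, which is why this sits at the brute-force end of the spectrum.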

In Modernity

There have also been lots of developments on making games less jagged at the output resolution. I’ll just name-drop these: MSAA, CSAA/EQAA, MLAA, FXAA, TXAA and SMAA. Each technique works with more or less information about the 3D scene to produce an anti-aliased result, with varying visual quality and time complexity.

I’m not quite sure, but one of the first games with a dynamic render resolution to achieve constant performance was Wipeout HD (2008), which, as Digital Foundry determined, varies the horizontal render resolution between a predefined number of steps. The first game I came across that offers an internal render resolution scale setting independent of UI resolution in the menu was ArmA 2 (2009). Crysis (2007) has a console setting for it. Those were quite naive upscales though, and that’s what changed over time as shader complexity and triangle density grew quicker than common hardware could keep up with. Middle-earth: Shadow of Mordor (2014) was among the first games to popularize internal render resolution as a setting.
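A stepped dynamic resolution scheme like the one described for Wipeout HD can be sketched as a small controller that picks the next width based on the last frame time. The step values, the 16.7 ms budget and the headroom factor below are assumptions for illustration, not the game's actual numbers.

```python
# Hypothetical horizontal resolution steps; the real values are not given here.
WIDTH_STEPS = [1280, 1440, 1600, 1760, 1920]


def next_step(step, frame_ms, budget_ms=16.7, headroom=0.85):
    """Return the index into WIDTH_STEPS to use for the next frame."""
    if frame_ms > budget_ms and step > 0:
        return step - 1  # over budget: drop one step
    if frame_ms < budget_ms * headroom and step < len(WIDTH_STEPS) - 1:
        return step + 1  # comfortable headroom: go one step up
    return step  # close to budget, or already at a limit: hold


step = len(WIDTH_STEPS) - 1        # start at full width
step = next_step(step, frame_ms=20.0)
render_width = WIDTH_STEPS[step]   # one step below full width
```

The gap between the "drop" and "raise" thresholds keeps the resolution from flickering between two steps when the frame time hovers around the budget.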

The advent of Temporal Anti-Aliasing (TAA) with Halo: Reach (2010) rapidly accelerated developments around using render resolution to manage render time in games, in time with PBR shaders and more advanced usage of lighting and shadow techniques. While Halo: Reach primarily used its TAA solution to smooth over jagged edges without the cost of MSAA or SSAA, the technique was soon refined and widely used for upscaling as well.

Because the clock speeds of the CPU and GPU in the PlayStation 4 and Xbox One were unlocked and dynamically adjusting, dynamic resolution scaling went mainstream on the consoles. While not strictly necessary, the PlayStation 4 Pro brought a hardware implementation for checkerboard rendering, where every second pixel is rendered and the rest are filled in through interpolation - reminiscent in philosophy of DOOM’s low detail mode. With Unreal Engine 4, we got TAAU as a mid-step that used TAA as an upscaling solution, and further refinements to the TAA technique along the way.
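The checkerboard idea can be sketched in a few lines. This is a deliberately simplified, purely spatial version that fills the skipped pixels from their rendered neighbours within the same frame; as far as I know, shipping implementations also reuse data from the previous frame, which this sketch leaves out. `shade` is a hypothetical per-pixel shading function.

```python
def checkerboard_fill(shade, width, height):
    """Shade every second pixel in a checkerboard pattern, interpolate the rest."""
    frame = [[None] * width for _ in range(height)]
    # Pass 1: shade only the pixels on the "even" squares of the checkerboard.
    for y in range(height):
        for x in range(width):
            if (x + y) % 2 == 0:
                frame[y][x] = shade(x, y)
    # Pass 2: fill each skipped pixel with the mean of its in-bounds 4-neighbours,
    # all of which lie on shaded squares.
    for y in range(height):
        for x in range(width):
            if (x + y) % 2 == 1:
                neighbours = [
                    frame[ny][nx]
                    for nx, ny in ((x - 1, y), (x + 1, y), (x, y - 1), (x, y + 1))
                    if 0 <= nx < width and 0 <= ny < height
                ]
                frame[y][x] = sum(neighbours) / len(neighbours)
    return frame


# A horizontal gradient is reconstructed exactly along a single row.
row = checkerboard_fill(lambda x, y: float(x), width=3, height=1)
```

The expensive `shade` call runs for only about half of the pixels; everything else is cheap averaging.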

In 2018, with the release of the Turing GPU architecture (RTX 2000), Nvidia pioneered upscaling using Machine Learning with Deep Learning Super Sampling, or DLSS. And the first attempt was… underwhelming. Nvidia overpromised and underdelivered, and yet it was a bit of science fiction. In short, a generic image enhancement model ran over the input frame to get rid of aliasing. Then, to improve the upscaling result, an upscaler drew on Nvidia-produced ground-truth frames from all kinds of different situations in each game that was to receive DLSS support. The more situations the upscaling model had been trained on, the more often you’d see a result that looked better than TAA.

In all other situations, it was markedly worse. Battlefield V (2018) was one of the games that received DLSS support early on, though still months after RTX 2000 graphics cards launched. See for yourself:

In a broad view we can see the DLSS-upscaled variant exhibits higher contrast in the tree leaves, where fine details are against a high-contrast background. (Source)

Zooming in we see ML model upscaling artifacts in the form of smearing that looks like anisotropic diffusion. (Source)

TechSpot’s and GamersNexus’ coverage shows some more comparisons and demonstrates that the performance difference is moderate, while the image quality difference is obvious.

What DLSS is and What it Isn’t

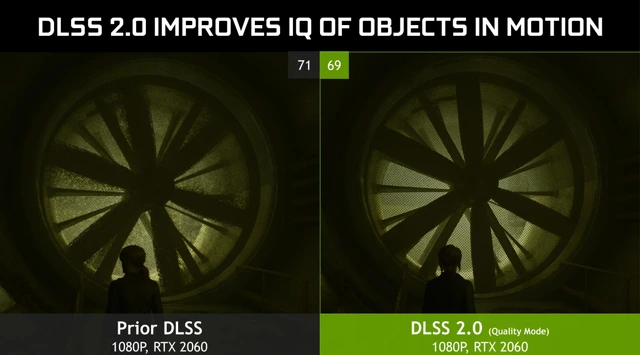

The rest is history. I was much more positive on the much-improved DLSS 2, which not only drastically increased in quality by using temporal data in addition to the spatial upscaling, but also became game-independent. It wasn’t a matter of “will DLSS support this game” anymore - now it was “will this game support DLSS”. And that, paired with the quality improvements, made it viable and sometimes even higher quality than TAA.

Then, the technology slowly drifted towards becoming a middleware suite, with an increasing number of features tacked on, much like GameWorks previously. The one most criticized until now must be Frame Generation.

When Frame Generation came onto the scene with DLSS 3, I was interested, but skeptical. My most positive read-between-the-lines on the marketing was that this is a way to prolong the service life of your graphics card. Not that Nvidia really wanted investors to think that way, but I thought that if it worked well enough, it could be a pretty killer feature. You give up some image quality, accept some artifacts and the added latency, and, provided the games you want to play support it, you gain another year or two of use. On the other hand, if you have a high-end system and a high refresh rate monitor, you get to experience high quality raytracing earlier than your hardware would otherwise allow. At high refresh rates, the artifacts would presumably be less noticeable.

And, on a technicality, it holds up its end of the bargain, especially with today’s DLSS versions, in which the artifacts around UI elements and the ghosting behind objects that travel in world space but stay static on screen have improved over time. But Nvidia’s DLSS Frame Generation simply can’t convincingly make a 4090 out of a 5070.

It’s not the technology itself that rubs me the wrong way, but the marketing. The main problem is that systems that would have the most use for Frame Gen can’t really use it. At a low native frame rate the added load on the graphics card and the resulting added latency and image quality degradation just don’t make a good enough experience. The effect is exacerbated by DLSS 4’s Multi Frame Generation, so this is demonstrated time and again, and again. So in reality it’s more of a value-add for high-end buyers and a stopgap for ever longer product generation lifecycles.

So, as an option, it’s good. I’m all for options! PC gaming is all about options. But its mere existence is a reflection of the price/performance stagnation in the middle and lower product segments.

Can The Frames Get Even More Fake?

DLSS 5 is a whole different beast. This is a video-to-video model that can enhance certain parts of the image it recognizes, in real-time.

At first glance, environments are treated to much more grounded and realistic looking lighting. Like here in Hogwarts Legacy (2023). Notice how the stack of cauldrons in the middle of the frame now gets a neat diffuse reflection. (Source)

Nvidia’s Zorah Unreal Engine 5 demo is also shown off. It also seems like an upgrade, but the adorned wooden board beneath the awning on the left should be in shade. A limitation of the screen-space technology that doesn’t get depth information about the 3D scene. (Source)

As demonstrated, these enhancements can go very far, like completely relighting a scene, changing characters’ faces, and changing the post-processing. It is extremely impressive that it’s even possible to run this in real-time, even if the demos ran on two RTX 5090s. But I’m very uneasy seeing it happen. There are just so many implications.

What I am absolutely sure about is that it’s overstepping with its infringement on artistic vision and coherency. Take a look at this:

This is the controversial shot of Grace Ashcroft from Resident Evil: Requiem (2026). Ignoring her for a moment, we lose parts of the fog in the background to contrast enhancement. Notice how the fog is still there between the light pole and the column of the subway tracks to the left. (Source)

Zoomed in, you can notice the reshading of her cheeks, wider eyes and the fuller lips. The reshading on her hair is absolutely amazing though. Also her eyes are slightly different from each other now.

I wonder if Grace’s face would look different from the side than from the front, based on the model’s training data. Or if the model would even recognize the side-profile as a face to apply the beauty filter to.

Anyway, DLSS 5 is also overstepping what the DLSS brand stood for until now - and not in a good way. Even though it already got diluted by the tacked-on supplementary features, the DLSS suite was still squarely in the same business as other anti-aliasing technologies: Get rid of jaggies, make game go faster. It’s about image quality and performance enhancements on a purely technical level. And DLSS 5’s features, as demonstrated, just ain’t that, plain and simple.

And that is completely ignoring the name: Deep Learning Super Sampling. DLSS 5’s new features should be called something else and live under a different middleware package. Like RTX Neural Rendering as part of RTX Kit for example.

But, for the sake of the argument, let’s say we’re super happy with the results and really wanted Neural Rendering to happen. What’s wrong with DLSS 5 on a technical level? In its demonstrated form, it’s just not at all what I made it out to be, and what I wanted out of it.

What I Wanted Out Of It

Now, to my disappointment, as far as I can tell, DLSS 5 has little to do with the RTX Neural Rendering features Nvidia showed off alongside the announcement of its Blackwell GPUs. The only obvious parallels are RTX Neural Faces and RTX Hair, which are really impressive from a technological standpoint in the stability of the output and the grounding to the input image. They just seem wildly misplaced in the gaming space.

And for me it’s not only because of the yassification - I can imagine that may actually be adjustable by developers in some respects. For me, it’s mostly the uncanny valley. And that is because we lack the animation tech to make the photorealistic faces look coherent with the way the bodies they’re attached to are animated. The Starfield (2023) demos look decent enough in still images, but in motion the result suffers from exactly the uncanny valley effect it was supposed to fix.

Not only do I find this overprocessed look hard to look at (notice the glow around the two main subjects), but I’d say the local contrast enhancements are a downgrade to the groundedness of the lighting overall. The other demos generally do make Starfield look better in stills though. (Source)

But it breaks down in motion. That’s the uncanny valley effect I mean. Starfield has very optimized animations (i.e. keyframe reduction) for performance reasons and the lipsync isn’t motion captured for every single line of dialogue.

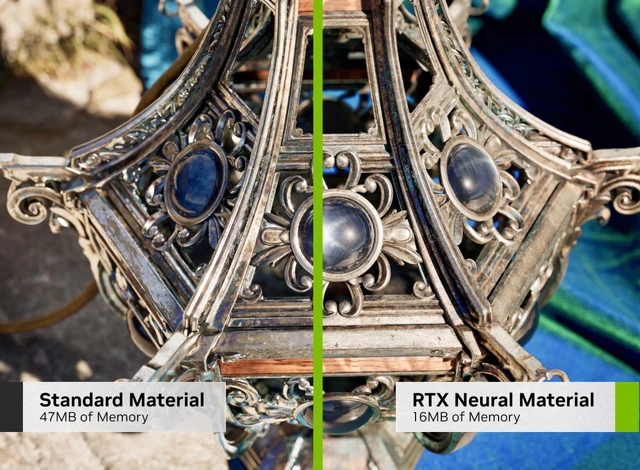

I didn’t really see the other RTX Neural Rendering features represented in DLSS 5’s demonstration. I was especially excited about the prospect of Neural Shaders and Neural Materials. My idealized dream version of it is this:

Basically how I want Neural Shaders and Materials to be handled. (Source)

Developers could define materials as neural-rendered in the engine, then the engine would make two passes on the frame. The first pass would use either a plain shader to store information on the material’s properties, or a traditional shader that approximates the effect. In the second pass, the ML model would take that information to inpaint the pixels precisely where the neural material is on screen, using depth, normals, lighting info, motion vectors, and whatever else it needs. We would basically render the scene traditionally, and use neural rendering to do things that are impossible or very hard to do in real-time. For example materials like silk, or having the power of Substance Designer/Painter in a neural material that auto-adapts to the shape of meshes. I hoped we could get rid of ugly tiled ground textures, and train the ML models on real-world materials and objects. All while using less memory.
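To illustrate the second pass of that wish, here is a toy sketch of the compositing step only. It is my own illustration, not anything Nvidia has shown: `material_ids` stands in for a per-pixel material ID buffer written by the first pass, and `neural_shade` is a hypothetical placeholder for the model inference, which in reality would also consume depth, normals, lighting and motion vectors.

```python
def composite_neural_materials(raster, material_ids, neural_ids, neural_shade):
    """Replace only the pixels whose material ID is flagged as neural-rendered."""
    out = []
    for y, row in enumerate(raster):
        out_row = []
        for x, pixel in enumerate(row):
            if material_ids[y][x] in neural_ids:
                # Hypothetical model call, fed with the traditionally shaded pixel.
                out_row.append(neural_shade(x, y, pixel))
            else:
                out_row.append(pixel)  # traditionally rendered pixel stays untouched
        out.append(out_row)
    return out


raster = [[1, 1],
          [1, 1]]
material_ids = [[0, 7],
                [7, 0]]  # 7 = a material flagged as neural, e.g. silk
result = composite_neural_materials(raster, material_ids, {7}, lambda x, y, p: p + 10)
```

The point of the mask is that the model never touches pixels the developer did not opt in, which is the opposite of a filter applied to the whole screen.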

Nvidia demonstrate as much in a research paper about it. The team states “we hope this article will stimulate adoption of small neural networks in real-time rendering”, citing Neural Shaders “outperform optimized GPU implementations”.

Making the iridescent cloth material is possible using a multi-layer shader. But it’s no easy feat. Especially if it has to look good from afar and up close.

I imagined Neural Shaders and Neural Materials could become useful enough to define a new open standard on the level of programmable shaders, become integrated into graphics APIs, and drive computer graphics forward for everyone. In the DLSS 5 press release, Jensen is quoted: “Twenty-five years after NVIDIA invented the programmable shader, we are reinventing computer graphics once again”.

But, alas, DLSS 5 is not a reinvention of computer graphics. We got a really nice and proprietary beauty filter instead. A screen-space beauty filter at that. One that makes frames slower to render, not faster.

All in all, I don’t think the gaming space needs this in its demonstrated form. Rather than this, I think we could use more efficient animation technologies, and advancements in character physics. The characters Metahuman produces look convincing enough on a 3D model and material level. We don’t need to superimpose an AI slop filter on top of them. We need more powerful hardware to use such high-poly models with advanced rigs everywhere, and cheap high-quality motion capturing tech so that anyone can produce believable animations that run on any hardware.

Only very recently have real-time subcutaneous muscle simulations started to crop up, but not in shipping products yet as far as I know. One example is a short The Witcher 4 demo in a State of Unreal presentation:

Unreal’s Chaos Flesh Muscle Simulation introduced with Unreal Engine 5.5 seems like a pretty brute-force way of doing it, but it’s certainly one way. Currently, I find it hard to imagine applying that system to face muscles for very precise skin deformations at scale. But I do think there’s lots of untapped potential there with ML-based vertex or fragment shaders trained on producing specific results from certain arrangements of bones and their relations to each other.

Where Does It Go Next?

With DLSS 5, the fake frames have reached new heights of fakery. And as anyone could have predicted, this resulted in another blowback for Nvidia. Jensen’s universally criticized “they’re completely wrong” goes to show that, at least at his level, the reaction was wholly unexpected.

And I kinda get it. The tech is genuinely cool, despite its inaccuracies and all, and it is using the inference hardware to its fullest. Maybe if I was in the thick of things developing it, I also would’ve been blindsided by the reaction.

But from the outside looking in… To me, this is a case of just because we can, doesn’t mean we should. I imagine the tech has much more useful applications than imposing generic beauty standards on the output image.

The internet’s exaggerated reaction was warranted in that it unmistakably demonstrated that the product isn’t where it needs to be. I had my share of fun with DLSS 5 Anything and the memes. But in reality the effect in the demos was likely cranked to 11 to make it really apparent. Surely teams with a bit of artistic intent could use it more tactfully.

And that leaves this lingering feeling of irresistibility. An aftertaste so sweet and yet so dangerous.

The implications for the games industry as a whole - that’s a whole different story. With over 94% market share on sales, Nvidia has a monopoly in the consumer space. Unfortunately, it’s even lauded for it. Whatever Nvidia pushes has a high chance of being adopted, simply because of market penetration.

The Shape of Things to Come

So if the beauty filter becomes reality, at one point or another there’s going to be enough cards out there able to run it. Either that, or gaming will transition to cloud subscriptions and real-time streaming to thin clients, and we’ll own nothing and be happy. The GPUs in the cloud will surely be powerful enough to run the thing.

I fear with time we’re going to find ourselves in the same situation as with the Lego Batman: Legacy of the Dark Knight (2026) example above. Today it’s reliance on technical enhancements, and tomorrow it’s going to be cosmetic enhancements.

There’s nothing inherently wrong with that, depending on how purist you want to be about it. But DLSS 5’s critics correctly identified that it tends to make the output look more same-y. And that’s the actually scary part I think. It’s more same-y and more pleasant. Like how camera apps on smartphones heavily post-process the output, mostly to our delight.

In any case, Nvidia allowed us this glimpse into the future, where lacking competition and increasingly expensive advances in chip design and manufacturing result in ever more price/performance stagnation. Where the reliance on such fakery will continue and likely increase. And we’ll slowly grow fond of it.

I don’t believe DLSS 5’s arrival is going to change anything in the short to mid-term - especially for low and mid-range gamers. But long-term I can see small productions gaining in image quality overall, large productions attempting to save a buck using it, and us getting further and further away from the art and craft of making video games. I see a divide happening where “homegrown” AI-less games stand out in a sea of sameness, fans and critics on both sides and all.

And that’s not a future I want to witness for video games. I hope the cosmetic enhancements of DLSS 5 never grow on us in the way DLSS and Frame Generation did.